Your machine learning models can only be as good as the data that feeds them. Before investing in sophisticated algorithms, invest in understanding what your data can—and can’t—support.

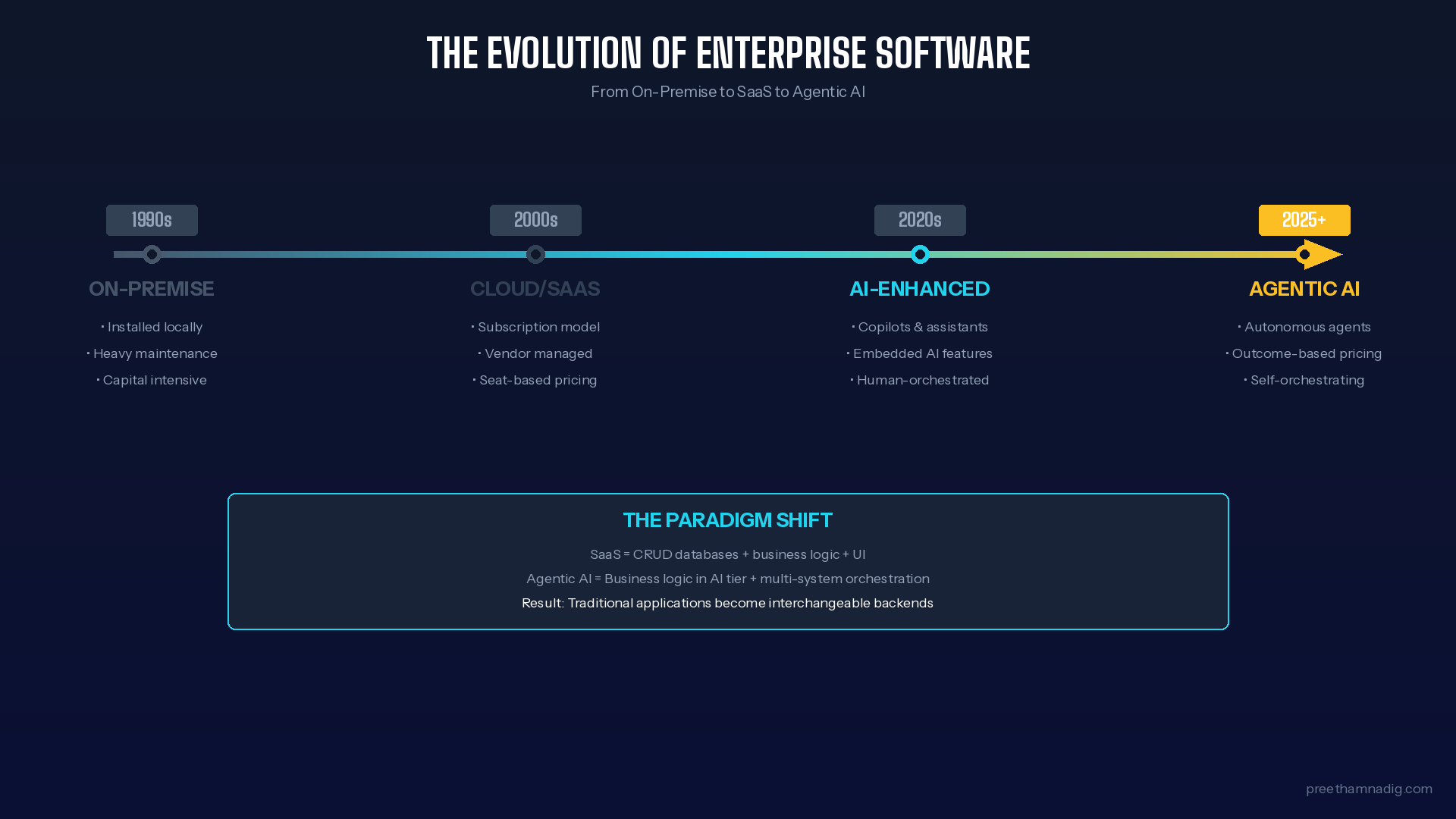

In my previous posts on the AI project landscape and guardrails for autonomous systems, I highlighted a critical consideration that deserves its own deep dive: data quality and quantity as the foundation for non-deterministic AI systems.

I’ve watched more AI initiatives stall at the data preparation stage than at any other point in the journey. Organizations get excited about predictive models, recommendation engines, and intelligent automation—then discover their data tells a different story than they expected.

This post is about reading that story before you write the algorithm.

The Data Readiness Paradox

Here’s the uncomfortable reality: the more sophisticated your AI ambitions, the more demanding your data requirements.

Deterministic systems—rule-based automation, RPA, scripted chatbots—can operate on structured, well-defined inputs. You know exactly what fields you need, in what format, from which systems. The data contract is explicit.

Non-deterministic systems flip this relationship. Machine learning models discover patterns in data, which means:

- The boundaries of what you can predict are set by what your data captures

- The accuracy of predictions depends on data quality you may never have measured

- The biases embedded in historical data become the biases of your model

You don’t just need data. You need data that can answer the questions you’re asking.

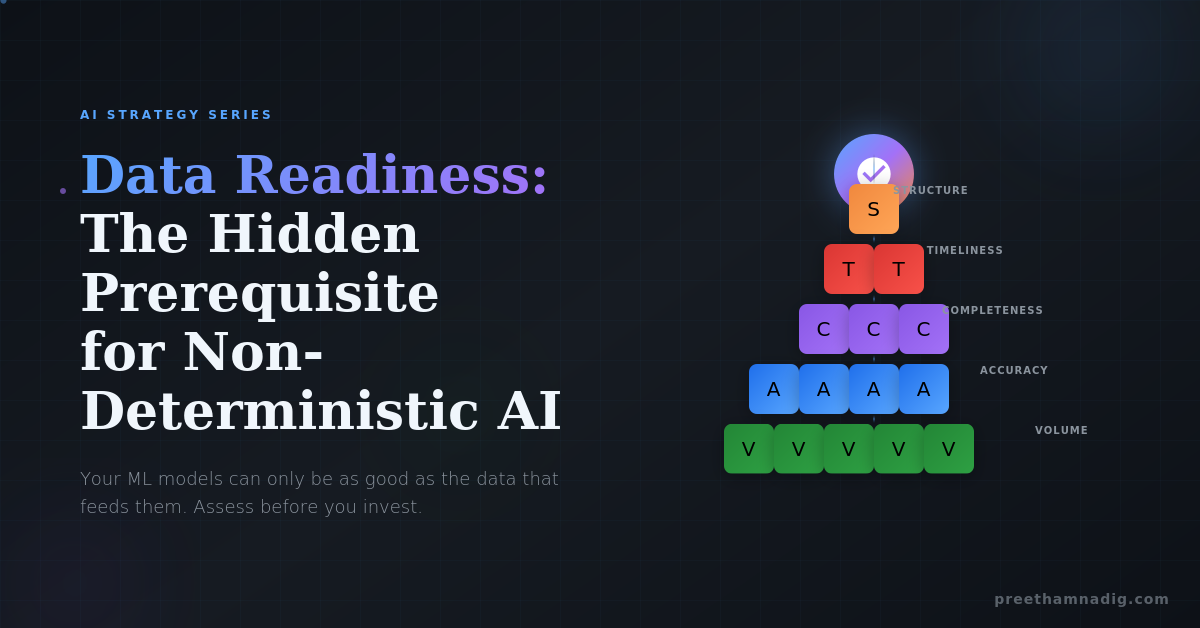

The Data Readiness Assessment Framework

Before launching any ML initiative, conduct a rigorous assessment across five dimensions: Volume, Accuracy, Completeness, Timeliness, and Structure. I call this the VACTS framework:

1. Volume: Do You Have Enough?

Machine learning is statistically hungry. The question isn’t “do we have data?” but “do we have enough data for the patterns to emerge?”

Key questions to ask:

- How many examples exist for each outcome you’re trying to predict?

- For classification problems, what’s the distribution across classes? (Imbalanced classes require special handling, such as resampling or class weighting.)

- How much data represents edge cases and exceptions?

- What’s the time span of your data—and does it capture full business cycles?

Rule of thumb: For tabular data, you typically need at least 10× as many training examples as you have features. For deep learning on unstructured data, multiply that by orders of magnitude. Rare events (fraud, churn, equipment failure) require even more data to model reliably.

Red flag: If someone says “we have millions of records,” ask how many represent the outcome you care about. A million transactions with 50 fraud cases won’t build a fraud model.
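To make the volume check concrete, here is a minimal sketch in plain Python. The function name `volume_report`, the feature count of 40, and the label values are assumptions I made up for illustration; the 10× threshold is the rule of thumb above, not a guarantee.

```python
from collections import Counter

def volume_report(labels, n_features, min_examples_per_feature=10):
    """Summarize class counts and apply the 10x examples-per-feature heuristic."""
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    n_rows = len(labels)
    # The rarest class is usually the one the model struggles to learn.
    minority_class, minority_count = min(counts.items(), key=lambda kv: kv[1])
    return {
        "n_rows": n_rows,
        "class_counts": dict(counts),
        "minority_class": minority_class,
        "minority_share": minority_count / n_rows,
        "meets_rule_of_thumb": n_rows >= min_examples_per_feature * n_features,
    }

# The red-flag scenario: one million transactions, 50 of them fraud.
labels = ["fraud"] * 50 + ["legit"] * 999_950
report = volume_report(labels, n_features=40)  # 40 features is an assumed number

print(report["class_counts"])
print(f"minority share: {report['minority_share']:.4%}")
print("passes 10x rule overall:", report["meets_rule_of_thumb"])
```

Notice that this dataset passes the overall row-count heuristic easily while still holding only 50 positive examples. That is why the per-class count matters more than the headline record count.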

2. Accuracy: Can You Trust It?

Garbage in, garbage out isn’t just a cliché—it’s the fundamental law of machine learning.

Key questions to ask:

- What’s the error rate in manual data entry?

- How are missing values handled across systems?

- When was the last data quality audit?

- Are there known discrepancies between source systems?

- Who “owns” data quality for each critical field?

Assessment approach: Sample 500 to 1,000 records and manually verify against source documents or ground truth. Calculate accuracy rates for fields that matter most to your model. Anything below 95% accuracy in critical fields should raise concerns.
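A sampling audit like this can be sketched in a few lines of plain Python. Everything named here (`draw_audit_sample`, `field_accuracy`, the toy records, the `verified` lookup standing in for hand-checked values) is hypothetical; in a real audit the verified values come from people checking source documents.

```python
import random

def draw_audit_sample(record_ids, k, seed=0):
    """Pick up to k record ids at random for manual verification."""
    ids = sorted(record_ids)
    return random.Random(seed).sample(ids, min(k, len(ids)))

def field_accuracy(records, verified, fields):
    """Per field, the share of audited records whose stored value matches the verified one."""
    if not verified:
        raise ValueError("verified must contain at least one audited record")
    result = {}
    for field in fields:
        matches = sum(
            1 for rid, truth in verified.items()
            if records[rid].get(field) == truth.get(field)
        )
        result[field] = matches / len(verified)
    return result

# Toy data: what the system stores (hypothetical).
records = {
    1: {"email": "a@example.com", "postcode": "10001"},
    2: {"email": "b@example.com", "postcode": "9021"},   # truncated postcode
    3: {"email": "c@example.com", "postcode": "60614"},
    4: {"email": "d@example.com", "postcode": "73301"},
}
# Toy data: what a human verified against source documents (hypothetical).
verified_lookup = {
    1: {"email": "a@example.com", "postcode": "10001"},
    2: {"email": "b@example.com", "postcode": "90210"},
    3: {"email": "c@example.com", "postcode": "60614"},
    4: {"email": "d@example.com", "postcode": "73301"},
}

sample_ids = draw_audit_sample(records, k=500)  # only 4 records exist here
verified = {rid: verified_lookup[rid] for rid in sample_ids}
accuracy = field_accuracy(records, verified, ["email", "postcode"])

# Flag fields below the 95% threshold mentioned above.
flagged = sorted(f for f, acc in accuracy.items() if acc < 0.95)
print(accuracy, flagged)
```

The fixed seed is a design choice: it makes the audit sample reproducible, so a second reviewer can check the same records.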

Red flag: “We’ve never actually measured our data quality” is more common than you’d think—and more dangerous.

3. Completeness: What’s Missing?

The patterns hidden in your data may be less interesting than the patterns hidden in what’s not there.

Key questions to ask:

- What percentage of records have null or missing values for key fields?

- Is missingness random, or systematic? (Systematic gaps bias your model)

- What events or outcomes aren’t captured in current systems?

- Are there customer segments or time periods with sparse data?

Assessment approach: Create a missingness matrix showing what percentage of each field is null, broken down by relevant segments (customer type, time period, geography). Look for patterns. If high-value customers have more complete records, your model will be optimized for them—and unreliable for everyone else.
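Here is one way the missingness matrix might look in code, as a sketch under assumptions. The function name, the `segment` field, and the toy customer rows are all invented for illustration, and I treat both `None` and empty strings as missing, which may not match how your systems encode gaps.

```python
from collections import defaultdict

def missingness_matrix(rows, segment_key, fields):
    """Return {segment: {field: share of rows where the field is None or ''}}."""
    totals = defaultdict(int)
    missing = defaultdict(lambda: defaultdict(int))
    for row in rows:
        segment = row[segment_key]
        totals[segment] += 1
        for field in fields:
            if row.get(field) in (None, ""):
                missing[segment][field] += 1
    return {
        seg: {field: missing[seg][field] / totals[seg] for field in fields}
        for seg in totals
    }

# Toy customer rows (hypothetical): high-value records are more complete.
rows = [
    {"segment": "high_value", "income": 120_000, "phone": "555-0100"},
    {"segment": "high_value", "income": 95_000, "phone": "555-0101"},
    {"segment": "standard", "income": None, "phone": "555-0102"},
    {"segment": "standard", "income": None, "phone": ""},
    {"segment": "standard", "income": 40_000, "phone": "555-0104"},
    {"segment": "standard", "income": None, "phone": "555-0105"},
]

matrix = missingness_matrix(rows, "segment", ["income", "phone"])
for segment, shares in matrix.items():
    print(segment, shares)
```

In this toy data, income is missing for 75% of standard customers and for none of the high-value ones. That is exactly the systematic pattern to look for before training.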

Red flag: If the data most critical to your use case is also the most frequently missing, you have an architecture problem, not just a quality problem.

4. Timeliness: Is It Fresh Enough?

Models trained on stale data make stale predictions.

Key questions to ask:

- What’s the latency between real-world events and their appearance in your data?

- How frequently is data refreshed in analytical systems?

- Are you training on point-in-time snapshots or current state?

- For time-series predictions, what’s the minimum prediction horizon your data supports?

Assessment approach: Map the data pipeline from source event to analytical availability. Identify bottlenecks. For predictive use cases, ensure you can access “as-of” data that reflects what was known at the time of prediction, not what you know now (to avoid look-ahead bias).
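The "as-of" idea is easier to see in code. This is a minimal sketch using only the standard library. The `as_of_value` helper and the balance history are hypothetical, and I assume each record carries the timestamp at which it became available to the analytical system.

```python
from bisect import bisect_right
from datetime import datetime

def as_of_value(history, prediction_time):
    """Return the latest value available at or before prediction_time, else None.

    `history` is a list of (available_at, value) tuples sorted by available_at.
    """
    times = [available_at for available_at, _ in history]
    idx = bisect_right(times, prediction_time)
    return history[idx - 1][1] if idx else None

# Hypothetical account balance snapshots, keyed by when they became available.
balance_history = [
    (datetime(2024, 1, 5), 1200),
    (datetime(2024, 2, 5), 300),
    (datetime(2024, 3, 5), 0),
]

prediction_time = datetime(2024, 2, 20)

# What the model could legitimately have seen on 20 February.
known_then = as_of_value(balance_history, prediction_time)

# What you get if you train on current state instead (look-ahead bias).
known_now = balance_history[-1][1]

print(known_then, known_now)
```

Training on `known_now` would leak the March balance into a February prediction. If you work in pandas, `merge_asof` performs the same kind of backward-looking match across a whole table.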

Red flag: Real-time prediction requirements with batch data refreshes create a gap where your model operates blind.

5. Structure: Is It ML-Ready?

Raw data and ML-ready data are rarely the same thing.

Key questions to ask:

- Is the data in a format your modeling tools can consume?

- Are categorical variables consistently encoded?

- Do you have clear definitions for each field?

- Can you join data across sources without ambiguity?

- Is there a documented data dictionary?

Assessment approach: Attempt a basic feature engineering exercise. Take your raw data and create the input features you’d need for a simple model. Every transformation you struggle with reveals a structural barrier.
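Two structural barriers tend to surface early in that exercise: categorical values encoded inconsistently, and join keys that are not unique. The sketch below checks for both in plain Python. The helper names and the toy data are assumptions for illustration, and trimming plus lowercasing is only one possible normalization.

```python
from collections import Counter, defaultdict

def inconsistent_encodings(values):
    """Group raw values that collapse to the same label after trimming and lowercasing."""
    groups = defaultdict(set)
    for value in values:
        groups[value.strip().lower()].add(value)
    # Keep only labels that appear under more than one raw spelling.
    return {norm: sorted(raw) for norm, raw in groups.items() if len(raw) > 1}

def duplicate_keys(rows, key):
    """Return key values that appear more than once, which makes a join ambiguous."""
    counts = Counter(row[key] for row in rows)
    return sorted(k for k, n in counts.items() if n > 1)

# Hypothetical raw state codes pulled from two source systems.
states = ["CA", "ca", "Ca ", "NY", "TX", "NY"]
encoding_issues = inconsistent_encodings(states)

# Hypothetical customer table that should have one row per customer_id.
customers = [
    {"customer_id": 1, "name": "Ana"},
    {"customer_id": 2, "name": "Ben"},
    {"customer_id": 2, "name": "Benjamin"},
    {"customer_id": 3, "name": "Chi"},
]
ambiguous_ids = duplicate_keys(customers, "customer_id")

print(encoding_issues)
print(ambiguous_ids)
```

Each non-empty result is a transformation someone has to decide how to handle before features can be built, which is the kind of barrier the exercise is meant to expose.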

Red flag: If it takes weeks to create a single analytical dataset, you’re not ready for iterative model development.

The Data Maturity Ladder

Not every organization needs perfect data to start with AI. But you need to honestly assess where you are and what that enables:

Level 1: Chaotic

- Data exists in silos with no integration

- No data quality standards or measurement

- Tribal knowledge required to interpret data

- What’s possible: Rule-based automation with heavy manual validation

Level 2: Reactive

- Some data integration exists

- Quality issues addressed when they cause problems

- Basic data dictionaries for key systems

- What’s possible: Descriptive analytics, simple predictive models with significant human oversight

Level 3: Proactive

- Centralized data platform (warehouse/lake)

- Regular data quality monitoring

- Documented data lineage

- Master data management for core entities

- What’s possible: Production ML models for well-defined use cases, human-enabled AI at scale

Level 4: Managed

- Data quality SLAs and accountability

- Automated data validation pipelines

- Feature stores for reusable ML inputs

- Data governance with clear ownership

- What’s possible: Autonomous AI systems with guardrails, complex multi-model architectures

Level 5: Optimized

- Data as a strategic asset with dedicated investment

- Self-service data discovery and preparation

- Continuous data quality improvement

- MLOps for model lifecycle management

- What’s possible: Continuous learning systems, adaptive AI, full autonomy in appropriate domains

The honest truth: Most organizations I work with are somewhere between Level 2 and Level 3. That’s okay—but it sets realistic boundaries on AI ambitions.

The Pre-Flight Checklist

Before greenlighting any ML project, I recommend completing this data readiness checklist:

Strategic Alignment

- The business question is clearly defined

- Success metrics are agreed upon

- The outcome we’re predicting is actually captured in data

- Historical patterns are likely to continue (or we’ve accounted for change)

Data Availability

- We’ve identified all required data sources

- Data access has been secured (legal, technical, organizational)

- Sample data has been reviewed by data scientists

- Volume is sufficient for the modeling approach

Data Quality

- Accuracy has been measured for critical fields

- Completeness has been profiled

- Known quality issues have remediation plans

- Data owners are engaged and accountable

Technical Readiness

- Data can be extracted in required format

- Processing infrastructure is available

- Feature engineering is feasible within timelines

- Refresh frequency meets use case requirements

Governance

- Data use is compliant with privacy regulations

- Bias risks have been assessed

- Model interpretability requirements are clear

- Ongoing monitoring approach is defined

If you can’t check most of these boxes, you’re not ready for ML—you’re ready for data infrastructure investment.

The Hard Conversation

Here’s what I tell executives who are eager to move fast on AI:

The investment in data readiness isn’t a delay—it’s the foundation.

Every hour spent cleaning data, documenting sources, and building reliable pipelines pays dividends across every future ML initiative. Conversely, every shortcut taken on data quality compounds into model failures, eroded trust, and projects that never make it past pilot.

The organizations winning with AI aren’t the ones with the most sophisticated algorithms. They’re the ones who treated data as infrastructure, not an afterthought.

Your ML models will learn exactly what your data teaches them. Make sure it’s teaching the right lessons.

What’s Next

In my next post, I’ll explore Explainability vs. Autonomy: The Tradeoff Every AI Leader Must Navigate—examining how the “black box” problem I mentioned in the original framework shapes real-world deployment decisions.
