When AI systems gain autonomy, your job shifts from controlling every step to defining the boundaries of acceptable outcomes. Here’s how to build the guardrails that let you sleep at night.

In my previous post on AI scalability, I mentioned a fundamental shift that happens as organizations mature their AI capabilities: you transition from managing processes to managing outcomes and guardrails.

That single sentence deserves its own exploration—because this shift is where most AI initiatives either graduate to true business impact or collapse under the weight of unintended consequences.

The Control Paradox

Here’s the uncomfortable truth about autonomous AI: the more capable it becomes, the less you can micromanage it.

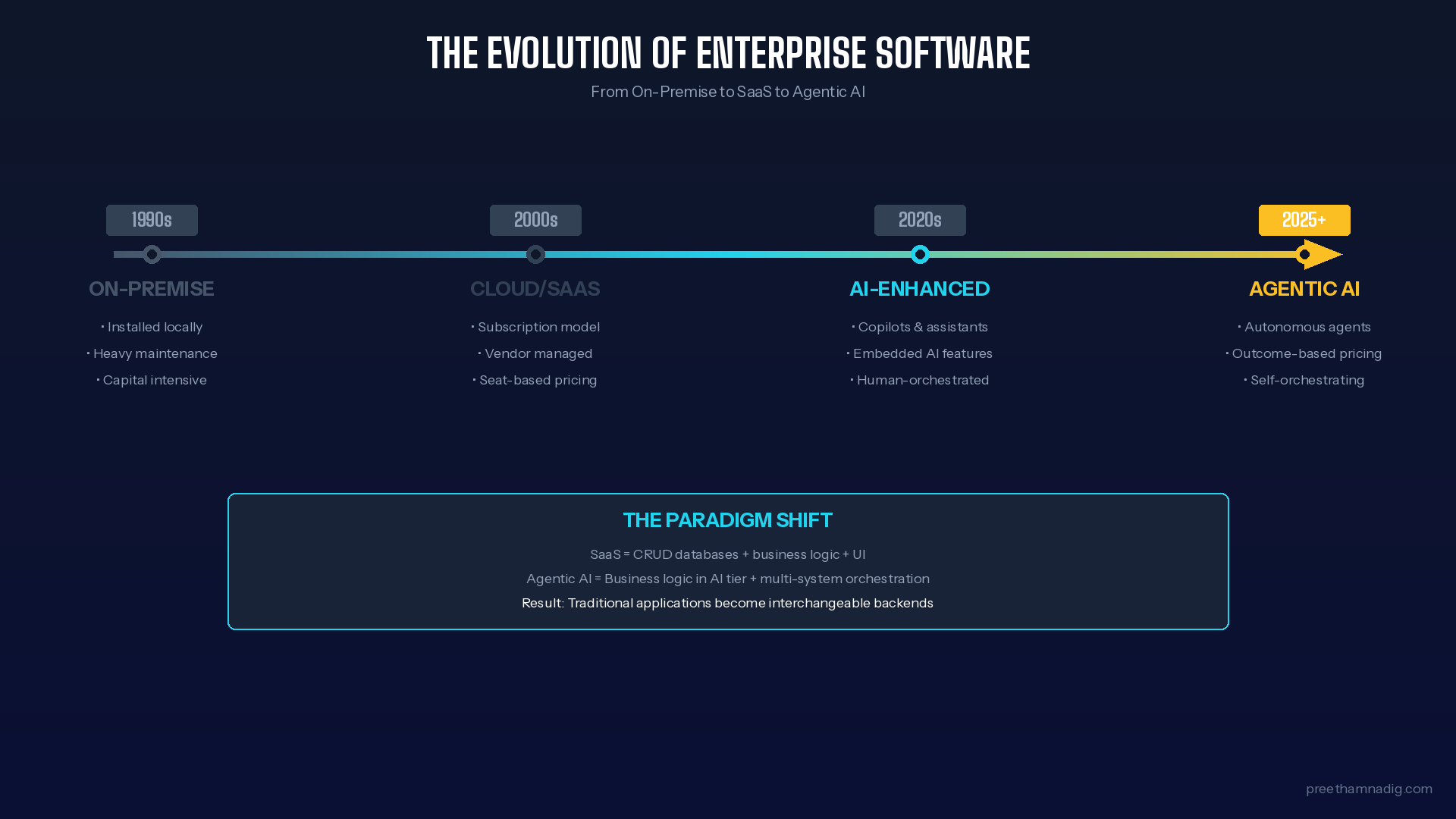

Traditional management works by controlling inputs and processes. You define the steps, train people to follow them, and measure compliance. The output is predictable because the path is prescribed.

AI autonomy breaks this model. A machine learning system processing thousands of decisions per minute can’t wait for human approval at each step. An LLM-powered agent navigating complex customer interactions will encounter scenarios you never anticipated. Attempting to script every possible response doesn’t just fail—it defeats the purpose of deploying AI in the first place.

The paradox: To capture the value of autonomous AI, you must relinquish control over the how while maintaining control over the what.

This is where guardrails come in.

What Guardrails Actually Are (And Aren’t)

Let’s clear up a common misconception: guardrails are not restrictions that limit AI capability. They’re boundaries that enable AI autonomy.

Think of guardrails like the lane markings and barriers on a highway. They don’t tell you which lane to drive in every second. They don’t dictate your speed through every curve. What they do is define the space within which you can operate freely, and create hard stops at the edges where the consequences of failure become unacceptable.

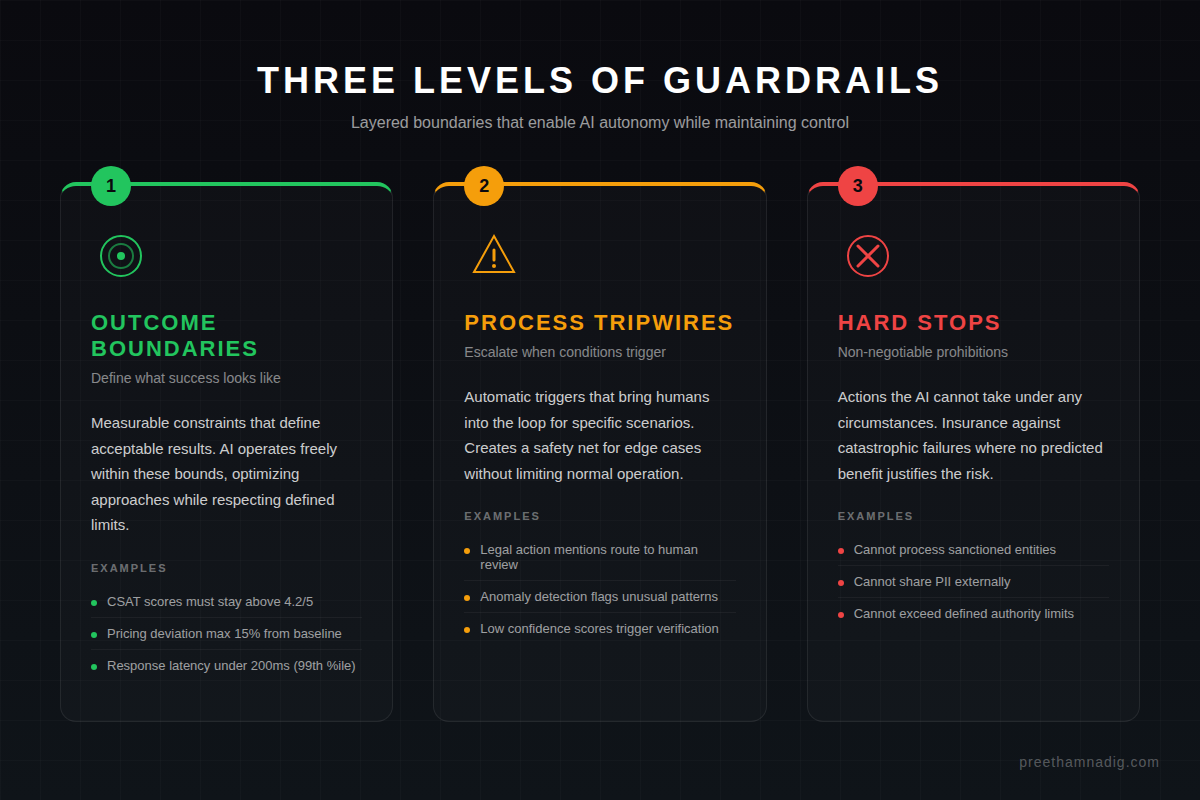

Guardrails operate at three levels:

1. Outcome Boundaries

These define what “success” and “failure” look like in measurable terms. Not how the AI should behave, but what results are acceptable.

Examples:

- Customer satisfaction scores must stay above 4.2/5

- Pricing recommendations cannot deviate more than 15% from baseline without escalation

- Response latency must remain under 200ms for 99% of requests

- No single customer interaction should exceed $50,000 in authorized commitments

Outcome boundaries give AI systems freedom to optimize within defined constraints. The system can experiment with approaches, learn from results, and improve—as long as it stays within bounds.
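
To make this concrete, here is a minimal Python sketch of outcome boundaries expressed as data rather than as process steps. The thresholds come from the examples above; the metric keys (`csat_score`, `price_deviation_pct`, and so on) and the `OutcomeBoundary` type are hypothetical names chosen for illustration, not part of any particular platform.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class OutcomeBoundary:
    name: str
    metric: str                         # key in the metrics dict (hypothetical names)
    is_within: Callable[[float], bool]  # True when the value is acceptable


# Thresholds come from the examples above; the metric keys are made up.
BOUNDARIES = [
    OutcomeBoundary("customer satisfaction", "csat_score", lambda v: v > 4.2),
    OutcomeBoundary("pricing deviation", "price_deviation_pct", lambda v: abs(v) <= 15.0),
    OutcomeBoundary("p99 latency", "latency_p99_ms", lambda v: v < 200.0),
    OutcomeBoundary("authorized commitment", "commitment_usd", lambda v: v <= 50_000),
]


def breached_boundaries(metrics: dict[str, float]) -> list[str]:
    """Return the names of boundaries that the reported metrics violate.

    Metrics missing from the dict are skipped, not treated as breaches.
    """
    return [
        b.name
        for b in BOUNDARIES
        if b.metric in metrics and not b.is_within(metrics[b.metric])
    ]
```

Notice that nothing here says how the system should reach those numbers. The check only looks at results, which is the point of an outcome boundary.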

2. Process Tripwires

These are triggers that escalate to human oversight when certain conditions are met. They don’t stop the AI; they bring humans into the loop for specific scenarios.

Examples:

- Any interaction mentioning legal action routes to human review

- Anomaly detection flags unusual patterns for investigation

- Confidence scores below a defined threshold trigger human verification

- Customer sentiment drops trigger real-time monitoring

Tripwires acknowledge that AI systems will encounter edge cases beyond their training. Rather than trying to anticipate every scenario (impossible) or restricting autonomy to prevent surprises (limiting), tripwires create a safety net that catches the cases that matter most.
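
A rough sketch of how tripwires like these might look in Python follows. Everything specific in it is an assumption: the `Interaction` fields, the threshold values, and the naive keyword match for legal language would all need to be replaced with whatever signals your own system produces.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interaction:
    text: str
    confidence: float       # model confidence, 0.0 to 1.0 (assumed to be available)
    sentiment_delta: float  # change in sentiment since the conversation started
    anomaly_score: float    # output of some anomaly detector (assumed)


# Illustrative thresholds only; tune these against your own data.
CONFIDENCE_FLOOR = 0.7
SENTIMENT_DROP_LIMIT = -0.3
ANOMALY_LIMIT = 3.0
LEGAL_TERMS = ("lawsuit", "attorney", "legal action")


def tripped_wires(interaction: Interaction) -> list[str]:
    """Return the reasons this interaction should be escalated to a human.

    An empty list means the AI continues on its own.
    """
    reasons = []
    lowered = interaction.text.lower()
    if any(term in lowered for term in LEGAL_TERMS):
        reasons.append("legal_mention")
    if interaction.confidence < CONFIDENCE_FLOOR:
        reasons.append("low_confidence")
    if interaction.sentiment_delta <= SENTIMENT_DROP_LIMIT:
        reasons.append("sentiment_drop")
    if interaction.anomaly_score >= ANOMALY_LIMIT:
        reasons.append("anomaly")
    return reasons
```

The function returns reasons instead of raising an error because a tripwire does not stop the AI. It hands the caller enough context to route the case to the right human.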

3. Hard Stops

These are non-negotiable prohibitions—actions the AI system cannot take under any circumstances, regardless of predicted outcomes.

Examples:

- Cannot process transactions for sanctioned entities

- Cannot share personally identifiable information externally

- Cannot make commitments beyond defined authority limits

- Cannot override safety-critical system parameters

Hard stops are your insurance policy against catastrophic failures. They exist for scenarios where no predicted benefit justifies the risk of getting it wrong.
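
As an illustration, a hard stop can be sketched in Python as a check that runs before any action executes and that takes no "expected benefit" input at all. The `ProposedAction` fields, the placeholder sanctions list, and the 50,000 limit are assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Optional

SANCTIONED_ENTITIES = frozenset({"EXAMPLE-SANCTIONED-001"})  # placeholder list
AUTHORITY_LIMIT_USD = 50_000  # hypothetical authority limit


class HardStopViolation(Exception):
    """Raised when a proposed action hits a non-negotiable prohibition."""


@dataclass(frozen=True)
class ProposedAction:
    counterparty_id: str
    amount_usd: float = 0.0
    shares_pii_externally: bool = False


def hard_stop_reason(action: ProposedAction) -> Optional[str]:
    """Return which hard stop the action violates, or None if it violates none."""
    if action.counterparty_id in SANCTIONED_ENTITIES:
        return "sanctioned_entity"
    if action.shares_pii_externally:
        return "external_pii_disclosure"
    if action.amount_usd > AUTHORITY_LIMIT_USD:
        return "exceeds_authority_limit"
    return None


def enforce_hard_stops(action: ProposedAction) -> None:
    """Block the action outright; there is no override based on predicted upside."""
    reason = hard_stop_reason(action)
    if reason is not None:
        raise HardStopViolation(reason)
```

The design choice worth noting is what the function does not accept: no confidence score, no projected revenue, no priority flag. If a prohibition can be argued away by a good enough prediction, it is a tripwire, not a hard stop.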

The Shift in Management Thinking

Moving from process management to guardrail management requires a genuine mindset shift. Here’s what changes:

From “Did they follow the steps?” to “Did we get the right outcome?”

In process management, success means compliance. Did the employee follow the procedure? Did the system execute the workflow correctly?

In outcome management, success means results. Did the customer get what they needed? Did the business objective advance? The path matters only insofar as it affects the destination.

Implication: You need robust outcome measurement. If you can’t measure it, you can’t manage it through guardrails.

From “Anticipate every scenario” to “Define the unacceptable”

Traditional process design tries to account for every possibility. Guardrail design inverts this: you define what must not happen and what must be achieved, then let the system figure out the space between.

Implication: You need to get very clear on your risk tolerance. What failures are recoverable? What failures are catastrophic? Where’s the line?

From “Approve before action” to “Monitor and intervene”

Human-in-the-loop becomes human-on-the-loop. Instead of reviewing every decision before execution, you observe patterns and step in when necessary.

Implication: You need real-time visibility into system behavior. Dashboards, alerts, and audit trails become critical infrastructure.

From “Blame the operator” to “Fix the system”

When an autonomous system produces a bad outcome, the relevant question isn’t “who approved this?” It’s “why did our guardrails allow this?”

Implication: Guardrails must be continuously refined based on observed failures. Every incident is a signal to improve the boundaries.

Designing Effective Guardrails: A Framework

Here’s a practical framework I use when helping organizations implement guardrails for autonomous AI systems:

Step 1: Define Your Outcome Objectives

Start with the business outcomes you’re trying to achieve. Be specific and measurable.

- What does success look like in quantifiable terms?

- What are the leading indicators that predict success?

- How quickly do you need to detect deviation from expected outcomes?

Step 2: Map Your Risk Landscape

Identify what can go wrong and categorize by severity and likelihood.

- Catastrophic risks: Low probability, extreme impact. These become hard stops.

- Significant risks: Medium probability, high impact. These need tripwires and escalation paths.

- Operational risks: Higher probability, manageable impact. These get outcome boundaries with monitoring.
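
One way to turn this mapping into something a team can maintain is a small risk register. The sketch below is only an illustration: the 1 to 5 scoring scales, the cut-off values, and the `Risk` and `Guardrail` names are assumptions, and the tiers are driven by impact because that is what separates the three categories above.

```python
from dataclasses import dataclass
from enum import Enum


class Guardrail(Enum):
    HARD_STOP = "hard stop"
    TRIPWIRE = "tripwire"
    OUTCOME_BOUNDARY = "outcome boundary"


@dataclass(frozen=True)
class Risk:
    description: str
    impact: int      # 1 (minor) to 5 (extreme); the scale is an assumption
    likelihood: int  # 1 (rare) to 5 (frequent); the scale is an assumption


def guardrail_for(risk: Risk) -> Guardrail:
    """Pick the guardrail type from impact, following the three tiers above."""
    if risk.impact >= 5:
        return Guardrail.HARD_STOP
    if risk.impact >= 3:
        return Guardrail.TRIPWIRE
    return Guardrail.OUTCOME_BOUNDARY


def by_exposure(register: list[Risk]) -> list[Risk]:
    """Order risks by impact x likelihood so the largest exposures are designed first."""
    return sorted(register, key=lambda r: r.impact * r.likelihood, reverse=True)
```

Likelihood does not change which guardrail a risk gets in this sketch. It only affects the order in which you work through the register.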

Step 3: Design Layered Defenses

Effective guardrails operate in layers. No single control catches everything.

- Pre-execution checks: Validate inputs and contexts before action

- In-execution monitoring: Track behavior in real-time during operation

- Post-execution analysis: Review outcomes and patterns after completion

- Periodic audits: Deep-dive reviews at regular intervals
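
The four layers can be sketched as a thin wrapper around whatever function actually performs the AI action. This is a simplified illustration under stated assumptions: the request shape, the `customer_id` check, the in-memory `AUDIT_LOG`, and the 50,000 limit are all hypothetical stand-ins for real validation, monitoring, and storage.

```python
from collections import Counter
from typing import Callable

AUDIT_LOG: list[dict] = []     # stand-in for a real audit store
COMMITMENT_LIMIT_USD = 50_000  # hypothetical limit


def run_with_layers(request: dict, act: Callable[[dict], dict]) -> dict:
    """Run one AI action through pre-, in-, and post-execution layers."""
    # Layer 1: pre-execution check on inputs and context.
    if not request.get("customer_id"):
        outcome = {"status": "rejected", "reason": "missing customer_id"}
    else:
        # Layer 2: in-execution monitoring of what the agent proposes to do.
        proposal = act(request)
        if proposal.get("amount_usd", 0) > COMMITMENT_LIMIT_USD:
            outcome = {"status": "escalated", "reason": "amount over limit"}
        else:
            outcome = {"status": "completed", **proposal}
    # Layer 3: post-execution record, written for every path.
    AUDIT_LOG.append({"request": request, "outcome": outcome})
    return outcome


def audit_summary() -> Counter:
    """Layer 4: periodic audit input, counting outcomes by status."""
    return Counter(entry["outcome"]["status"] for entry in AUDIT_LOG)
```

Each layer is deliberately simple. The protection comes from the fact that a request has to get past all of them, and that every path, including rejections, leaves a record for the audit.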

Step 4: Build Feedback Loops

Guardrails should improve over time based on real-world performance.

- Track guardrail activations (how often are boundaries hit?)

- Analyze false positives (are guardrails too restrictive?)

- Investigate misses (what got through that shouldn’t have?)

- Update boundaries based on evidence
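
The first three questions can be answered from the same data, provided someone reviews a sample of decisions after the fact. Here is a minimal sketch, assuming each reviewed decision records whether a guardrail fired and whether a human reviewer later judged the decision harmful. The `ReviewedDecision` type and the report keys are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewedDecision:
    guardrail_fired: bool
    was_harmful: bool  # verdict from later human review (assumed to exist)


def guardrail_report(decisions: list[ReviewedDecision]) -> dict[str, float]:
    """Summarize how often guardrails fire, over-fire, and miss."""
    total = len(decisions)
    fired = [d for d in decisions if d.guardrail_fired]
    false_positives = sum(1 for d in fired if not d.was_harmful)
    misses = sum(1 for d in decisions if d.was_harmful and not d.guardrail_fired)
    return {
        # How often are boundaries hit?
        "activation_rate": len(fired) / total if total else 0.0,
        # Of the activations, what share blocked something harmless?
        "false_positive_share": false_positives / len(fired) if fired else 0.0,
        # What got through that should not have?
        "misses": float(misses),
    }
```

A rising false-positive share suggests a boundary is too tight, while any non-zero miss count points to a boundary that is too loose or missing entirely. Either one is evidence for the last bullet: update the boundary.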

Step 5: Establish Governance

Define who owns what and how decisions get made.

- Who sets guardrail parameters?

- Who reviews guardrail effectiveness?

- Who has authority to override guardrails in emergencies?

- How are guardrail changes tested and deployed?

The Human Element

Here’s something I want to be explicit about: guardrails don’t eliminate the need for human judgment. They focus it where it matters most.

In a guardrail-managed system, humans shift from routine decision-making to:

- Setting the boundaries: Defining what outcomes matter and where the limits lie

- Handling escalations: Making judgment calls in ambiguous situations

- Improving the system: Refining guardrails based on observed performance

- Maintaining accountability: Owning the outcomes even when AI executes the actions

This is higher-value work. It requires strategic thinking rather than task execution. For many organizations, this shift represents both an opportunity and a challenge—it demands different skills from the humans in the loop.

Common Pitfalls to Avoid

As organizations implement guardrails, I see several recurring mistakes:

Guardrails as Afterthoughts

Bolting guardrails onto an already-deployed system is harder and riskier than designing them in from the start. Build guardrails into your architecture, not around it.

Over-Constraining

If your guardrails are so tight that they prevent the AI from doing anything useful, you haven’t enabled autonomy—you’ve just automated your existing process with extra steps. The goal is appropriate constraint, not maximum constraint.

Under-Monitoring

Guardrails without monitoring are theater. If you can’t see when boundaries are approached or breached, you can’t respond and you can’t improve.

Static Boundaries

Business conditions change. Regulations evolve. Customer expectations shift. Guardrails that made sense six months ago may be too loose or too tight today. Build in regular review cycles.

Accountability Gaps

When something goes wrong, there must be clear ownership. “The AI did it” is not an acceptable answer. Someone owns the guardrails, and that someone owns the outcomes.

The Competitive Advantage

Organizations that master guardrail-based management gain a significant competitive advantage. They can:

- Deploy AI faster: Without needing to anticipate every scenario upfront

- Scale AI more broadly: Extending autonomy to more domains with confidence

- Iterate AI continuously: Improving systems based on real-world feedback

- Trust AI more deeply: Enabling genuinely autonomous operation where appropriate

Meanwhile, organizations stuck in process-management mode either move too slowly (trying to script everything) or too recklessly (deploying without appropriate controls). Neither approach wins.

Where to Start

If you’re ready to shift toward guardrail-based management, here’s my recommended starting point:

Pick one AI-driven process where you currently rely on heavy human oversight. Then:

- Define three outcome metrics that would indicate success

- Identify your single biggest catastrophic risk (make this a hard stop)

- Design two tripwires for scenarios that need human judgment

- Build a monitoring dashboard that shows outcome metrics and guardrail status

- Run for 30 days, then refine based on what you learn

This focused pilot will teach you more about guardrail design than any theoretical exercise. And it will build the organizational muscle for scaling the approach.

Final Thought

The shift from managing processes to managing outcomes isn’t just a tactical change—it’s a philosophical one. It requires trusting systems to find their own path while defining the boundaries they must respect.

This is the essence of the guardrails imperative: not less control, but smarter control. Not blind trust in AI, but structured autonomy with accountability.

The organizations that figure this out will define the next era of AI-powered business. The ones that don’t will either move too slowly to compete or move too fast to survive.

The wheel is passing to AI. The question is whether you’ve built the guardrails to keep it on the road.
